A Health Insurer Cut Provider Data Research Time by up to 70% by Giving Their Agents the Context Their SOPs Never Had

The Situation

Industry

Healthcare: Health Plan

Function

Provider Network Operations

Team Size

113 Operations Staff

Deployment

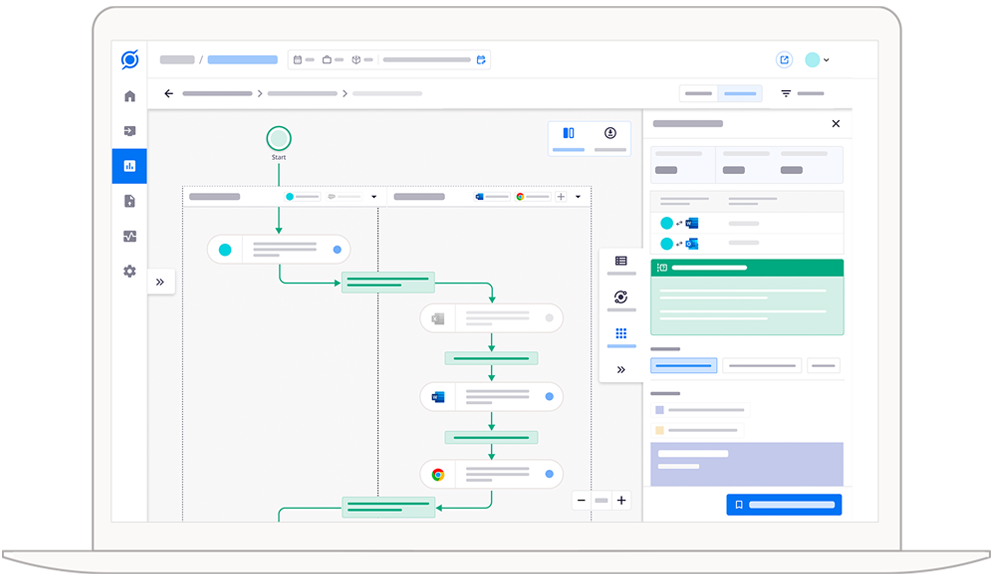

5 Agents · 90-Day implementation

The Trigger

“Are we building automation for the process as it was designed, or as it is actually executed?”

What the Operations Team Found

| Finding | What it meant for operations |
|---|---|
| 76% of all effort sat inside a single step | The Research stage (verifying a provider’s identity and reconciling their records before any change is made) consumed the vast majority of all time observed across the Roster Manager role. The SOPs described it in a handful of bullet points. In practice, it was most of the workflow. |
| The team operated across three distinct workflows, not one | Scout identified three substantively different execution paths across the five roles: a coordination role managing intake and research, a validation role performing high-volume data scrubbing, and two loading roles executing the final records update into the network database. Each required a different sequence of systems and handoffs. Only the simplest variant was documented anywhere. |
| A legacy network database was navigated by memory alone | The core network database had no API access. Analysts navigated its 25 distinct screens using sequences they had learned on the job. Scout observed and mapped 489 navigation interactions; before this, that knowledge existed only in people’s heads. Inconsistent navigation procedures across team members were one of the business challenges stated at the outset. |
| Physicians existed under different identifiers in different systems | The same provider appeared under a credentialing number, a contract reference, a claims processing ID, and a case tracking number. Analysts knew which identifiers belonged together. No system linked them. Every cross-referencing decision was made by experienced staff from memory. |
| Manual data transfer was the de facto integration layer | Hundreds of copy-paste events were observed, each one a data transfer between two systems with no technical integration. These were invisible to IT, undocumented in the SOPs, and responsible for a meaningful portion of the error risk in the workflow. |
| Intake was happening across two channels without standardisation | Staff spent 93% of their intake effort in Outlook and 7% in SharePoint, with no standard process for either. Different team members handled the same case type differently depending on how the request arrived. |
| Scrubbing work was invisible to documentation | The validation role spent 50% of its effort scrubbing provider records in Excel, interacting with fields like Medicare Number, Degree, Birthdate, Name, and Gender, before uploading to the next system. This entire stage was undocumented at the field level. No SOP captured which columns mattered, in what order, or why. |

What Changed

The team stopped designing agents from documentation. They designed from observed execution. This shifted the entire automation program. The question moved from “how do we automate the documented steps?” to “how do we build agents that can actually do what our most experienced analysts do?” Those are different questions with different answers.

Routing by role and workflow, not by assumption

Every provider data change is now classified at intake before work begins. Agents receive the correct execution path (coordination, validation, or loading) from the start, which removes the single biggest source of inconsistency in the old workflow.
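
As an illustration only, classify-then-route can be pictured as a lookup from request type to execution path, done once before any work starts. The request type names, the `route` helper, and the rule that an unrecognised request goes to a person are assumptions made for this sketch; the story does not describe the actual classification criteria.

```python
from enum import Enum
from typing import Optional


class ExecutionPath(Enum):
    COORDINATION = "coordination"  # intake and research
    VALIDATION = "validation"      # high-volume data scrubbing
    LOADING = "loading"            # final update into the network database


# Hypothetical request types; the real intake taxonomy is not published.
ROUTING_TABLE = {
    "new_provider_request": ExecutionPath.COORDINATION,
    "roster_file_scrub": ExecutionPath.VALIDATION,
    "approved_record_update": ExecutionPath.LOADING,
}


def route(request_type: str) -> Optional[ExecutionPath]:
    """Classify a provider data change once, at intake, before any work begins.

    Returns None for an unrecognised request type, which this sketch treats
    as a signal to hand the case to a person rather than guess a path.
    """
    return ROUTING_TABLE.get(request_type)
```

For example, `route("roster_file_scrub")` returns `ExecutionPath.VALIDATION`, so the case starts on the validation path instead of being sorted out mid-workflow.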

The legacy database, made accessible

The 25-screen navigation sequence that experienced analysts had learned over years of handling cases was reconstructed from the 489 navigation interactions Scout observed, then codified into executable agent instructions. The network database, which had no API and no documentation, is now accessible to automation along the same path an analyst would take.
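
One way to picture reconstructing a navigation path from observed interactions is to count which screen most often follows each screen, then walk those transitions from a starting screen. This is a minimal sketch under that assumption; the screen names and the `reconstruct_path` helper are hypothetical, and the story does not say how Scout derives the sequence.

```python
from collections import Counter, defaultdict


def reconstruct_path(sessions, start, max_steps=25):
    """Walk the most frequently observed screen-to-screen transitions from `start`.

    `sessions` is a list of screen-name sequences, one per observed case.
    `max_steps` caps the path length (25 mirrors the screen count above).
    """
    transitions = defaultdict(Counter)
    for screens in sessions:
        for current, nxt in zip(screens, screens[1:]):
            transitions[current][nxt] += 1

    path = [start]
    # An empty Counter is falsy, so the walk stops at a screen with no observed exit.
    while len(path) < max_steps and transitions[path[-1]]:
        nxt, _count = transitions[path[-1]].most_common(1)[0]
        if nxt in path:  # stop at a cycle instead of looping forever
            break
        path.append(nxt)
    return path


# Hypothetical observed sessions (screen names are illustrative).
observed = [
    ["Search", "Provider Summary", "Address", "Save"],
    ["Search", "Provider Summary", "Address", "Save"],
    ["Search", "Provider Summary", "Contracts"],
]
print(reconstruct_path(observed, "Search"))  # ['Search', 'Provider Summary', 'Address', 'Save']
```

Following the majority transition favours the path most analysts actually take; a production system would also need to handle branches that depend on the case, which this sketch ignores.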

Provider identity resolved using context

The cross-referencing logic that analysts applied from memory (knowing that a credentialing number, a contract reference, and a claims ID all referred to the same provider) was derived from the co-occurrence patterns observed in the workflow data: when identifiers consistently appeared together in the same session, the relationship was captured. Agents now apply that logic systematically, replacing the manual reconciliation step that was the single largest time cost in Research.
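
The co-occurrence idea can be sketched as follows: count how often two identifiers appear in the same session, link pairs that clear a minimum count, and merge linked identifiers into one provider using union-find. The two-session threshold, the identifier formats, and the choice of union-find are assumptions for illustration; the story describes the principle, not the implementation.

```python
from collections import Counter
from itertools import combinations


def resolve_identities(sessions, min_cooccurrence=2):
    """Group identifiers that repeatedly appear together in the same session.

    `sessions` is a list of identifier collections, one per observed session.
    Pairs seen together at least `min_cooccurrence` times are linked, and
    linked identifiers are merged with a union-find structure.
    """
    pair_counts = Counter()
    for ids in sessions:
        for a, b in combinations(sorted(set(ids)), 2):
            pair_counts[(a, b)] += 1

    parent = {}

    def find(x):
        parent.setdefault(x, x)
        while parent[x] != x:
            parent[x] = parent[parent[x]]  # path halving keeps trees shallow
            x = parent[x]
        return x

    for (a, b), count in pair_counts.items():
        if count >= min_cooccurrence:
            parent[find(a)] = find(b)

    groups = {}
    for x in list(parent):
        groups.setdefault(find(x), set()).add(x)
    return list(groups.values())


# Hypothetical identifiers for one provider across four systems.
sessions = [
    ["CRED-1001", "CONTRACT-77", "CLAIMS-3", "CASE-9"],
    ["CRED-1001", "CONTRACT-77", "CLAIMS-3"],
    ["CRED-2002", "CONTRACT-77"],  # a one-off co-occurrence, not linked
]
print(resolve_identities(sessions))
```

Requiring repeated co-occurrence is what keeps a single coincidental session, like the third one above, from merging two different providers.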

Intake standardised

A single intake channel has been established. Agents now handle intake consistently, regardless of how a request would previously have arrived, removing a source of variation that had led to the same case type being handled differently.

Scrubbing made explicit

The Excel-based scrubbing logic (which fields to validate, in what sequence, and at what level of granularity) was derived from observed behaviour across the validation role and codified as structured agent steps. The undocumented field-level knowledge that previously lived only with experienced analysts is now part of the agent’s execution context.
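
A minimal sketch of field-level scrubbing as an ordered list of explicit checks. The five field names come from the observed validation work; the rules attached to them (a non-empty Medicare Number, allowed degree and gender codes, a birthdate in the past) are hypothetical placeholders, not the health plan’s actual rules.

```python
from datetime import date


def _non_empty(value):
    return bool(value and str(value).strip())


def _past_iso_date(value):
    # Expects an ISO date string such as "1970-05-01"; anything else fails.
    try:
        return date.fromisoformat(value) < date.today()
    except (TypeError, ValueError):
        return False


# Ordered (field, check) pairs. Field names are from the observed workflow;
# the checks themselves are hypothetical placeholders.
SCRUB_RULES = [
    ("Medicare Number", _non_empty),
    ("Degree", lambda v: v in {"MD", "DO", "NP", "PA"}),
    ("Birthdate", _past_iso_date),
    ("Name", _non_empty),
    ("Gender", lambda v: v in {"F", "M", "U"}),
]


def scrub(record):
    """Return the fields that fail validation, in the order they are checked."""
    return [field for field, check in SCRUB_RULES if not check(record.get(field))]
```

Keeping the rules as ordered data rather than ad hoc spreadsheet habits is the point: which columns matter, and in what order, becomes something that can be read, reviewed, and changed.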

Manual data transfers replaced

Each copy-paste event observed during Research was mapped to a specific data dependency between two systems. Those dependencies became structured agent steps, replacing the undocumented manual workarounds the team had been using as their integration layer.
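
Collapsing observed copy-paste events into data dependencies amounts to grouping identical transfers and counting them. A minimal sketch, assuming each event records its source system and field and its target system and field; the `CopyPasteEvent` type, the system and field names, and the minimum-occurrence cut-off are hypothetical.

```python
from collections import Counter
from typing import NamedTuple


class CopyPasteEvent(NamedTuple):
    source_system: str
    source_field: str
    target_system: str
    target_field: str


def map_dependencies(events, min_occurrences=2):
    """Count identical transfers; each distinct one is a candidate agent step.

    Transfers seen fewer than `min_occurrences` times are dropped as likely
    one-offs (the cut-off is an assumption of this sketch).
    """
    counts = Counter(events)
    return {dep: n for dep, n in counts.most_common() if n >= min_occurrences}


events = (
    [CopyPasteEvent("Credentialing", "Credentialing No.", "Network DB", "Provider ID")] * 3
    + [CopyPasteEvent("Claims", "Claims ID", "Case Tracking", "Reference")]
)
for dep, n in map_dependencies(events).items():
    print(f"{dep.source_system}.{dep.source_field} -> {dep.target_system}.{dep.target_field}: {n}")
```

Each surviving dependency names exactly what an agent step must read and where it must write, which is the information the manual workaround never recorded.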

Calibrated, not just launched

A 52-day calibration phase ran alongside deployment. Operations staff reviewed agent recommendations and flagged overrides. The confidence threshold (the point below which an agent escalates to a human rather than acting) was set from this data, not from assumptions. On day one, the acceptance rate was 58%; after calibration, it was 89%.
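
Setting a threshold from review data can be sketched like this: take the reviewed recommendations, each with the agent’s confidence score and whether staff accepted it, and pick the lowest threshold at which the recommendations the agent would act on meet a target acceptance rate. The target value and this selection rule are assumptions of the sketch; the story does not say exactly how the threshold was chosen.

```python
def calibrate_threshold(reviews, target_acceptance):
    """Return the lowest confidence threshold that meets `target_acceptance`.

    `reviews` holds (confidence, accepted) pairs collected while staff
    reviewed agent recommendations. Returns None if no threshold qualifies.
    """
    for threshold in sorted({confidence for confidence, _ in reviews}):
        acted_on = [accepted for confidence, accepted in reviews if confidence >= threshold]
        if sum(acted_on) / len(acted_on) >= target_acceptance:
            return threshold
    return None


def should_escalate(confidence, threshold):
    """Below the threshold (or with no threshold at all), hand the case to a person."""
    return threshold is None or confidence < threshold


# Hypothetical review data: (agent confidence, accepted by staff).
reviews = [(0.95, True), (0.90, True), (0.80, True), (0.70, False), (0.60, False), (0.50, True)]
threshold = calibrate_threshold(reviews, target_acceptance=0.85)
print(threshold)  # 0.8
```

Because acceptance need not rise smoothly with confidence, the sketch tests each candidate threshold against the review data directly rather than assuming a curve.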

The Impact

63%

Reduction in Research cycle time, the stage that accounted for 76% of all workflow effort

50–70%

Projected effort reduction across the full workflow

3 months → 4 weeks

Time to complete agent blueprint discovery

89%

Agent recommendation acceptance rate following calibration, reflecting not just technical accuracy but how closely the agents mirror how the team actually works

Provider data throughput increased significantly without adding headcount

Accuracy improved on the cases most prone to error

The legacy system, now accessible to agents

Institutional knowledge has been captured

The previous automation program’s failure is now understood

Why This Matters Beyond One Team
