How a Leading Insurer Increased Straight-Through Claim Processing by 32% by Turning Human Expertise into Continuously Improving AI

The Challenge

Why were agents escalating these cases?

Why were adjudicators overriding decisions?

Why were these interventions so frequent?

The result: automation plateaued, manual effort remained high, and the expected ROI from AI remained out of reach.

The Root Cause: Agents Missing Operational Judgment

The gap wasn’t in the models underlying the agents—it was in the operational knowledge they lacked. Real-world claims processing depended on context that lived beyond structured systems: historical provider behaviour, prior claim patterns, adjudicator judgment, and information captured in unstructured formats like email and documents.

None of this context was visible to the agents. More critically, it wasn’t visible to the organization either. Scout revealed the scale of the problem:

41% of observed claim adjudications required human intervention, with a notable portion involving unplanned adjudicator review

32

18 override clusters explained many repeated adjudicator interventions, often shaped by combinations of claim type, customer type, and claim value band

Agent telemetry and human actions existed in separate silos. There was no unified view of how a claim moved across agents and adjudicators—nor any way to understand the reasoning behind decisions. Human overrides were treated as exceptions, not as signals. Recurring patterns were not systematically captured. And every improvement cycle started from scratch.

Without a way to capture real-world scenarios as structured evaluation data, the organization struggled to validate fixes with confidence. Iteration remained slow, and agents could not systematically learn from production.

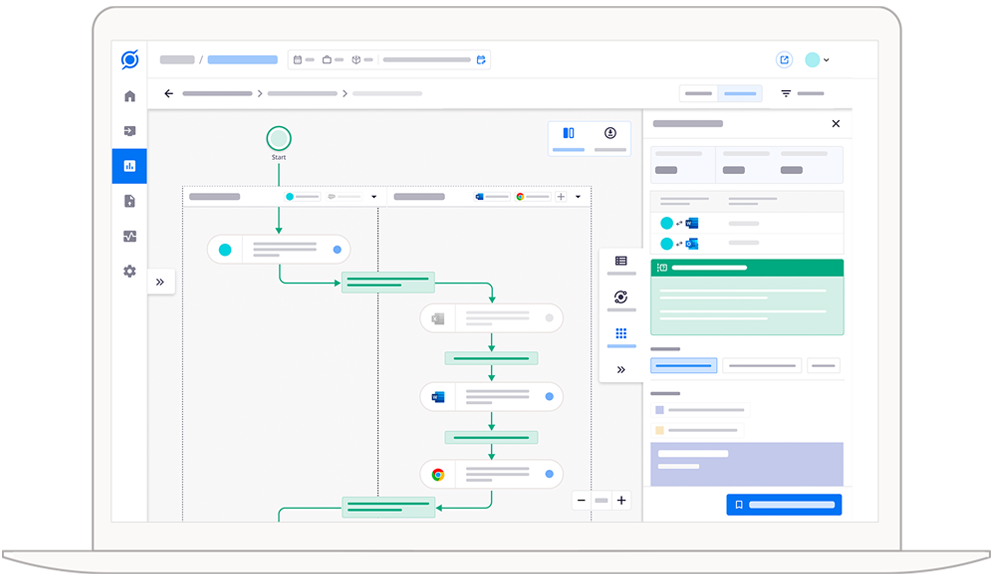

How Scout Delivered a Solution

Unified Human + Agent Observability

High-Fidelity Business Context

Reasoning-Aware Insights

Eval Set Generation from Production Workflows

Scout automatically generated evaluation datasets from real claim executions. Each dataset captured the full context of a case: agent inputs, decisions, and human-corrected outcomes as ground truth. This enabled teams to systematically test improvements, increase coverage of production scenarios, and move from anecdotal debugging to data-driven iteration.
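To make the idea concrete, here is a minimal sketch of how an evaluation case might be assembled from a recorded claim execution. It is illustrative only and does not reflect Scout's actual data model or API: the `ClaimExecution` and `EvalCase` types, their field names, and the `accuracy` helper are all hypothetical. The one assumption carried over from the text is that a human-corrected outcome, when present, is treated as ground truth.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional


@dataclass(frozen=True)
class ClaimExecution:
    """Hypothetical record of one claim as it ran in production."""
    claim_id: str
    agent_inputs: dict
    agent_decision: str
    human_decision: Optional[str] = None  # None when no adjudicator intervened


@dataclass(frozen=True)
class EvalCase:
    """Hypothetical evaluation case derived from a production execution."""
    claim_id: str
    inputs: dict
    expected: str          # ground truth: the human outcome when one exists
    was_overridden: bool   # True when the adjudicator changed the agent's decision


def build_eval_set(executions: List[ClaimExecution]) -> List[EvalCase]:
    """Turn recorded executions into eval cases, preferring human outcomes."""
    cases = []
    for ex in executions:
        overridden = ex.human_decision is not None and ex.human_decision != ex.agent_decision
        expected = ex.human_decision if ex.human_decision is not None else ex.agent_decision
        cases.append(EvalCase(ex.claim_id, ex.agent_inputs, expected, overridden))
    return cases


def accuracy(cases: List[EvalCase], agent: Callable[[dict], str]) -> float:
    """Share of eval cases where a candidate agent matches the ground truth."""
    if not cases:
        return 0.0
    return sum(1 for c in cases if agent(c.inputs) == c.expected) / len(cases)
```

A candidate agent change could then be scored against the same cases before and after the change, so that a fix is validated on the scenarios that actually triggered overrides rather than on anecdotes.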

Closed-Loop Improvement

Key Insights Uncovered

18 override clusters drove over 60% of repeated manual adjudications, concentrated around specific combinations of claim type, customer segment, and claim value band.

Trusted-provider and repeat-customer scenarios accounted for a significant share of fraud overrides, where adjudicators applied contextual judgment unavailable to agents.

Over a third of intervention cases required context outside core systems, including prior claim history, notes, and exception handling patterns.

A small subset of workflow variants drove a disproportionate share of delays and rework, creating a clear starting point for agent improvement.
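The first insight above, overrides concentrating in a small number of clusters, can be illustrated with a simple grouping. The sketch below is an assumption-laden example, not the method Scout uses: the record fields (`claim_type`, `customer_segment`, `claim_value`), the value band thresholds, and both function names are hypothetical.

```python
from collections import Counter


def value_band(amount: float) -> str:
    """Bucket a claim value; the thresholds here are illustrative assumptions."""
    if amount < 1_000:
        return "low"
    if amount < 10_000:
        return "mid"
    return "high"


def override_clusters(overrides):
    """Count overrides per (claim type, customer segment, value band), largest first."""
    counts = Counter(
        (o["claim_type"], o["customer_segment"], value_band(o["claim_value"]))
        for o in overrides
    )
    return counts.most_common()


def clusters_covering(ranked, share):
    """Return the smallest prefix of ranked clusters covering at least `share` of overrides."""
    total = sum(n for _, n in ranked)
    selected, covered = [], 0
    for key, n in ranked:
        if total == 0 or covered / total >= share:
            break
        selected.append(key)
        covered += n
    return selected
```

Ranking clusters this way gives a prioritized list: the few combinations at the top are where an agent improvement is likely to remove the most repeated manual work.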

The Impact

Recurring manual decisions were systematically identified and automated

Adjudicators spent less time on repetitive cases and more on truly complex scenarios

Agent behaviour became transparent, explainable, and continuously improvable

Results Delivered

32%

28%

3x

Summary

By unifying human and agent workflows—and grounding every decision in real-world context—the insurer moved beyond static automation to a continuously improving system. Human expertise was no longer hidden in overrides and workarounds. It became structured, observable, and reusable at scale.
